- Highlights of October 2023 Spam Update – Google Latest SEO Updates

- Google’s Official Announcement: Google Latest SEO Updates

- Previous Google Spam Updates

- Key Highlights Related to Google Core Updates

- Understanding the Google Spam Update

- What Does Google Consider Spam?

- How to Protect Your Website from the October 2023 Google Spam Update

- How Reliqus SEO Services Can Help Prevent Your Website from Being Affected by Spam Updates?

Google has announced its October 2023 Spam Update, and it’s generating quite a buzz in the SEO community. This update to Google’s spam detection systems is designed to combat spammy websites and provide users with more valuable and relevant search results.

Highlights of October 2023 Spam Update – Google Latest SEO Updates

Google has officially confirmed the rollout of its latest core algorithm update, known as the “October 2023 Core Update.” This is the third core update of the year 2023, following the March and August updates.

The rollout is expected to take up to two weeks to complete. During this time, it is normal for websites to experience fluctuations in their search rankings.

- Google has launched the October 2023 Core Update alongside the October 2023 Spam Update, which aims to clean up spam and improve search results.

- The update process may take up to two weeks to complete.

- Rankings may experience some fluctuations during the next few weeks.

- The main aim of this update is to enhance the search experience by providing more helpful and reliable results for searchers.

Google’s Official Announcement: Google Latest SEO Updates

Google officially announced the release of the October 2023 core update on X and stated that the rollout may take up to two weeks to complete. Once the update is finished, Google will update its Search Status Dashboard to provide users with the latest information on the update.

Previous Google Spam Updates

Over the past couple of years, Google has been committed to combating spam and improving the search experience. Here’s a timeline and our coverage of previous spam updates:

- December 14, 2022: Released the December 2022 link spam update. The rollout was complete as of January 12, 2023. [Reference]

- October 19, 2022: Released the October 2022 spam update. The rollout was complete as of October 21, 2022. [Reference]

- November 3, 2021: Released the November 2021 spam update. The rollout was complete as of November 11, 2021 and seemed significant. [Reference]

- July 26, 2021: Released the July 2021 link spam update. The rollout was complete as of August 24, 2021. [Reference]

- June 28, 2021: Released the second part of the June 2021 spam update. The rollout was completed later that same day. [Reference]

- June 23, 2021: Released the first part of the June 2021 spam update. The rollout was completed later that same day. [Reference]

Key Highlights Related to Google Core Updates

Google typically releases several core updates throughout the year, each with its own purpose and impact on search results. Here are some key points to understand about Google’s core algorithm updates:

Frequency: Google regularly updates its algorithms to improve the quality and relevance of search results. These updates can occur multiple times in a year, with some updates being more significant than others.

Impact: Core algorithm updates can have a significant impact on search rankings and visibility. Websites may experience fluctuations in rankings, both positive and negative, as Google adjusts its algorithms to better understand and prioritize relevant content.

Recovery: If your website is negatively affected by a core update, it’s important to analyze and understand the changes made to the algorithm. By improving the quality of your content and addressing any issues identified by the update, you can work towards recovering lost rankings.

Helpful Content: Google’s core updates are designed to reward websites that provide helpful, informative, and high-quality content to users. Focus on creating content that addresses the needs and interests of your target audience, and ensure it is relevant, comprehensive, and well-researched.

User Experience: User experience plays a crucial role in Google’s algorithms. Websites that offer a positive user experience, with fast loading times, easy navigation, and mobile-friendliness, are more likely to be rewarded with higher search rankings.

Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T): Google values websites that demonstrate experience, expertise, authoritativeness, and trustworthiness in their respective fields. Building a strong reputation, showcasing credentials, and providing accurate information can contribute to higher rankings.

Continuous Improvement: Google’s core algorithm updates reflect its commitment to continuously improving search results. By staying updated on the latest algorithm changes and optimizing your website accordingly, you can adapt to evolving search trends and maintain or improve your rankings.

Understanding the Google Spam Update

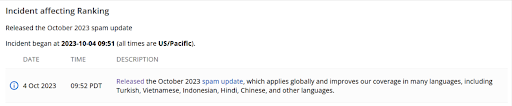

Google rolled out an update to its spam detection systems on October 4, 2023. This update specifically targets spam across various languages, addressing concerns raised by the global community.

In an effort to create a cleaner and more reliable search environment, Google aims to combat cloaking, hacked, auto-generated, and scraped spam in languages such as Turkish, Vietnamese, Indonesian, Hindi, Chinese, and more.

Keep in mind that it may take a few weeks for the update to fully roll out, so be patient as you notice changes in search results.

What Does Google Consider Spam?

Google outlines what it doesn’t allow in its spam policies documentation. Here are some of the things Google considers spammy or misleading:

1. Cloaking

The update addresses issues related to websites that display different content to users and search engines, a tactic commonly known as cloaking.

Cloaking is a deceptive and unethical SEO (Search Engine Optimization) technique in which a website displays different content to search engine crawlers (like Googlebot) than what it shows to human visitors.

The purpose of cloaking is to manipulate search engine rankings and improve a website’s visibility in search results. It is considered a violation of search engine guidelines, and websites engaging in cloaking can face penalties, including being removed from search engine indexes.

Here’s how cloaking typically works:

- User-Agent Detection: Cloaking usually involves detecting the user-agent of the visitor. The user-agent is a string that a browser or crawler sends to the web server with each request to identify the client type and version. Search engine crawlers send recognizable user-agents, so website owners can identify them.

- Serving Different Content: When the website identifies that a request is coming from a search engine crawler, it serves one set of content, often optimized with keyword stuffing and other SEO techniques, with the goal of ranking higher in search results. When the request is from a regular user, it serves a different set of content that may be more user-friendly but is often not optimized for search engines.

Cloaking is problematic for several reasons:

- Deception: It deceives both search engines and users by presenting different content to each group. This undermines the trustworthiness of search results.

- Manipulation: Cloaking is used to manipulate search engine rankings, giving websites an unfair advantage over others that adhere to search engine guidelines.

- Poor User Experience: When users click on search results and find that the actual content is different from what they expected based on the search snippet, it results in a poor user experience.

- Penalties: Search engines like Google have sophisticated algorithms and systems in place to detect and penalize cloaking. Penalties can include a drop in rankings, removal from search indexes, or even bans.

Consequences of Cloaking:

- SEO Penalties: Search engines actively penalize websites that engage in cloaking by reducing their search rankings or removing them from search results.

- User Trust: Cloaking undermines trust in search engine results and can lead to frustration among users who do not find the content they were looking for.

- Reputation Damage: Websites associated with cloaking may face reputational damage, as they are seen as engaging in deceptive practices.

Preventing and Addressing Cloaking:

- Ethical SEO: Focus on ethical and white-hat SEO practices, which involve providing valuable, high-quality content to both search engines and users.

- Content Consistency: Ensure that the content you serve to search engines is the same as what you provide to human visitors.

- Regular Audits: Conduct regular audits of your website to check for any signs of cloaking or other deceptive SEO practices.

- Stay Informed: Keep up to date with search engine algorithms and guidelines to ensure compliance.
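The content-consistency check described above can be approximated with a small script. The following is a minimal sketch, not a definitive audit tool: it requests the same URL with a crawler-style and a browser-style `User-Agent` header and compares the visible text. The two user-agent strings, the function names, and the 0.8 threshold are assumptions chosen for illustration. Sites that cloak by IP address rather than user-agent will not be caught this way, and pages with dynamic content may differ between requests for innocent reasons.

```python
import difflib
import urllib.request
from html.parser import HTMLParser

# Illustrative user-agent strings (assumptions for this sketch).
CRAWLER_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/118.0 Safari/537.36"


class _TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return the page's visible text as a single space-joined string."""
    parser = _TextExtractor()
    parser.feed(html)
    return " ".join(parser.chunks)


def content_similarity(html_a, html_b):
    """Return a 0..1 similarity ratio between the visible text of two pages."""
    return difflib.SequenceMatcher(None, visible_text(html_a), visible_text(html_b)).ratio()


def fetch(url, user_agent):
    """Fetch a URL with the given User-Agent header (performs a network request)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def looks_cloaked(url, threshold=0.8):
    """Flag a URL when the crawler and browser responses differ substantially."""
    return content_similarity(fetch(url, CRAWLER_UA), fetch(url, BROWSER_UA)) < threshold
```

Calling `looks_cloaked("https://example.com/")` on your own pages during a regular audit gives a rough signal; any flagged page still needs a manual look before drawing conclusions.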

To maintain a good online reputation and adhere to ethical SEO practices, it’s essential to avoid cloaking and focus on providing valuable, relevant, and consistent content to both search engines and users. High-quality content, proper on-page SEO, and white-hat link-building strategies are more effective and sustainable ways to improve your website’s search engine rankings.

2. Hacked Spam

Websites compromised by malicious actors will be under increased scrutiny to ensure a more secure online environment.

Hacked spam, also known as website hacking or SEO spam, refers to the malicious activity in which unauthorized individuals or bots gain access to a website and inject spammy or malicious content for various purposes. This type of spam can harm a website’s reputation, compromise its security, and negatively impact its search engine rankings. Here’s what you need to know about hacked spam:

How Hacked Spam Occurs:

- Vulnerabilities: Hackers exploit vulnerabilities in a website’s security, which can include outdated software, weak passwords, or unpatched security flaws.

- Malicious Code: Once inside, hackers insert spammy or malicious code into the website’s files or database.

- Unauthorized Links: They often add links to unrelated or low-quality websites to manipulate search engine rankings or promote their own agenda.

Types of Hacked Spam:

- Pharma Hacked Spam: Promotes pharmaceutical products or illegal drugs.

- Casino or Gambling Hacked Spam: Promotes online casinos or gambling sites.

- SEO Spam: Attempts to boost the search engine rankings of other websites through the injected links.

- Malware Distribution: Can serve as a platform for distributing malware to site visitors.

Consequences of Hacked Spam:

- SEO Penalties: Search engines like Google can penalize hacked websites, leading to a drop in rankings or removal from search results.

- Reputation Damage: Hacked spam can damage a website’s reputation and deter visitors.

- Legal Issues: Depending on the nature of the spam, legal issues may arise.

- Security Risks: The website may remain vulnerable to further attacks, potentially compromising sensitive data.

Preventing and Addressing Hacked Spam:

- Regular Updates: Keep your website software, plugins, and themes up to date to minimize vulnerabilities.

- Strong Passwords: Use strong, unique passwords for all accounts connected to your website.

- Security Plugins: Implement security plugins or services to monitor and protect against hacking attempts.

- Regular Scans: Routinely scan your website for malware and suspicious code.

- Backup and Recovery: Maintain regular backups of your website to restore it in case of a breach.

- Webmaster Tools: Use Google Search Console (formerly Webmaster Tools) to monitor your site for security and indexing issues.

- Professional Assistance: If your site is compromised, seek help from professionals experienced in cleaning and securing hacked websites.
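As a rough illustration of the "Regular Scans" step, the sketch below looks through a page's HTML for links whose URL or anchor text contains typical pharma or gambling terms, or that are hidden with inline CSS. The keyword list, the regular expressions, and the function name are hypothetical and deliberately simple. A real scan would also need to cover the database, server files, and externally loaded scripts, so treat this as a starting point rather than a replacement for a security tool.

```python
import re

# Hypothetical keyword list for this sketch; tune it for your own site.
SUSPICIOUS_TERMS = ("viagra", "cialis", "casino", "poker", "payday loan")

# Matches <a ... href="...">anchor text</a> and captures the URL and the anchor text.
LINK_RE = re.compile(
    r'<a\b[^>]*href=["\']([^"\']+)["\'][^>]*>(.*?)</a>',
    re.IGNORECASE | re.DOTALL,
)
# Inline styles commonly used to hide injected links from human visitors.
HIDDEN_RE = re.compile(r"display\s*:\s*none|visibility\s*:\s*hidden", re.IGNORECASE)


def find_suspicious_links(html, terms=SUSPICIOUS_TERMS):
    """Return links that contain a suspicious term or are hidden via inline CSS."""
    findings = []
    for match in LINK_RE.finditer(html):
        href, anchor = match.group(1), match.group(2)
        haystack = (href + " " + anchor).lower()
        hits = [term for term in terms if term in haystack]
        hidden = bool(HIDDEN_RE.search(match.group(0)))
        if hits or hidden:
            findings.append({"href": href, "terms": hits, "hidden": hidden})
    return findings
```

Running this over the rendered HTML of key templates (home page, footer, popular posts) after each deployment or plugin update can surface injected links early.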

Dealing with hacked spam requires swift action to minimize damage and restore your website’s integrity. It’s crucial to take website security seriously and proactively protect your site against potential threats.

3. Auto-Generated Spam

Content created by automated processes, often lacking value or relevance, will face stricter measures to prevent its proliferation.

Auto-generated spam, also known as auto-generated content (AGC) or spammy autogenerated content, refers to low-quality, often nonsensical, or irrelevant content that is automatically generated by computer programs or bots rather than being created by human authors. These types of spammy content are typically created to manipulate search engine rankings, generate ad revenue, or promote dubious products or services. Here’s more information about auto-generated spam:

How Auto-Generated Spam Occurs:

- Automated Programs: Hackers or spammers use automated scripts or programs to generate vast amounts of content quickly.

- Scraping: Some auto-generated spam is created by scraping existing content from legitimate websites and then slightly modifying it to appear unique.

- Keyword Stuffing: Auto-generated content often involves excessive keyword stuffing in an attempt to improve search engine rankings.

Characteristics of Auto-Generated Spam:

- Poor Quality: The content is typically of low quality, with nonsensical sentences, grammatical errors, and often irrelevant information.

- Lack of Value: Auto-generated content rarely provides any valuable information or insights to the reader.

- Repetitive: It may consist of repetitive blocks of text, simply changing keywords or phrases while keeping the overall structure similar.

- Irrelevant Links: Auto-generated spam often includes links to unrelated or potentially harmful websites.

Consequences of Auto-Generated Spam:

- SEO Penalties: Search engines like Google actively penalize websites that feature auto-generated spam by reducing their search rankings or removing them from search results.

- User Experience: Auto-generated spam can result in a poor user experience, as visitors often encounter irrelevant or low-quality content.

- Reputation Damage: It can damage the reputation of a website or online platform that is associated with spammy content.

- Legal Issues: Auto-generated spam can also lead to legal issues, particularly if it involves copyright violations or fraudulent activities.

Preventing and Addressing Auto-Generated Spam:

- Content Moderation: Regularly review and moderate user-generated content if your website allows user contributions.

- Captchas and Verification: Implement captchas and other user verification methods to prevent automated submissions.

- Secure Your Website: Ensure that your website’s security is robust to prevent unauthorized access and spammy content injection.

- Use SEO Best Practices: Focus on creating high-quality, original, and valuable content that adheres to SEO best practices.

- Monitor for Spam: Regularly monitor your website for signs of auto-generated spam, such as sudden increases in low-quality content.
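Two of the characteristics listed earlier, keyword stuffing and repetitive text, can be estimated with simple counts. The sketch below is one possible heuristic, not a method Google has published: it computes the share of words equal to a given keyword and the fraction of three-word sequences that appear more than once. The function names are assumptions, and what counts as "too high" depends on your content, so no thresholds are built in.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text, keyword):
    """Share of tokens that equal `keyword` (single-word keywords only)."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    return tokens.count(keyword.lower()) / len(tokens)


def repeated_trigram_ratio(text):
    """Fraction of three-word sequences that occur more than once in the text."""
    tokens = tokenize(text)
    trigrams = [tuple(tokens[i:i + 3]) for i in range(len(tokens) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    repeated = sum(count for count in counts.values() if count > 1)
    return repeated / len(trigrams)
```

Tracking these two numbers for new user-submitted pages makes sudden spikes easier to spot, at which point a human reviewer can decide whether the content is actually spam.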

Dealing with auto-generated spam requires a proactive approach to prevent it from infiltrating your website in the first place and a vigilant effort to detect and remove any existing spammy content. Search engines are committed to providing users with high-quality, relevant content, and they actively penalize websites associated with spam or low-quality content.

4. Scraped Spam

Instances where content is copied and pasted from other sources with minimal added value will be targeted, promoting original and authentic content.

Scraped spam, also known as content scraping or web scraping spam, refers to the practice of copying or “scraping” content from other websites without permission and republishing it on one’s own website or platform.

This content is typically used to generate traffic, ad revenue, or backlinks without providing any unique or original value to users. Scraped spam is generally considered unethical and can lead to legal issues and penalties from search engines. Here’s more information about scraped spam:

How Scraped Spam Occurs:

- Automated Scraping: Scrapers use automated tools or bots to extract content from other websites, including text, images, and even entire articles.

- Republishing: The scraped content is often republished on the spammer’s website with little to no modification, making it appear as if it’s original.

- Monetization: Spammers may use scraped content to generate ad revenue through ads, affiliate marketing, or other monetization methods.

Characteristics of Scraped Spam:

- Lack of Attribution: Scraped content rarely attributes the original source or author, presenting it as if it were created by the spammer.

- Duplicate Content: Since it’s copied from other sources, scraped content is often duplicate and offers no unique value.

- Irrelevant Content: Spammers may scrape content unrelated to their website’s niche or audience to increase the quantity of content.

Consequences of Scraped Spam:

- Legal Issues: Content scraping without proper attribution or permission can lead to copyright violations and legal action by the original content creators.

- SEO Penalties: Search engines like Google penalize websites that feature scraped content by reducing their search rankings or removing them from search results.

- Reputation Damage: It can harm the reputation of the website or platform associated with scraped content, as it is seen as untrustworthy and unoriginal.

- Poor User Experience: Users often encounter the same content across multiple websites, resulting in a poor user experience.

Preventing and Addressing Scraped Spam:

- Copyright and Licensing: Understand and respect copyright laws and licensing agreements. Only use content with proper attribution or permission.

- Monitor for Scraped Content: Regularly monitor your website for signs of scraped content by using plagiarism detection tools or manual checks.

- Unique and Valuable Content: Focus on creating original, high-quality, and valuable content that provides a unique perspective or information to your audience.

- Report Scraping: If you discover scraped content from your website on another site, you can report it to the hosting provider or take legal action if necessary.
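For the monitoring step, one common way to compare two texts for copied passages is to break each into overlapping word sequences ("shingles") and measure their overlap with Jaccard similarity. The sketch below assumes you already have the text of your article and of the suspected copy. The function names and the default shingle size of five words are illustrative choices, not part of any plagiarism tool's API.

```python
import re


def shingles(text, size=5):
    """Return the set of overlapping `size`-word sequences in the text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        # Short texts become a single shingle so they can still be compared.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(text_a, text_b, size=5):
    """Return the 0..1 overlap between the shingle sets of two texts."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)
```

A score near 1.0 suggests a near-verbatim copy, while a score near 0.0 suggests unrelated text. Scores in between call for a manual comparison before filing a report.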

It’s essential to follow ethical content creation practices and respect the intellectual property rights of others to maintain a positive online presence and avoid legal and SEO consequences associated with scraped spam.

Google’s guidelines also advise against overly aggressive commercial tactics like false claims and misrepresenting products or services. The main goal of websites should be to give users an honest, transparent, good-faith experience.

How to Protect Your Website from the October 2023 Google Spam Update

Google frequently updates its search algorithms to improve the quality of search results and combat spam. To stay ahead and ensure your website is not negatively affected by algorithm updates, you can follow these general best practices:

- Create High-Quality Content: Google’s primary goal is to provide users with valuable and relevant content. Focus on creating high-quality, original content that answers user queries and provides value.

- Optimize On-Page SEO: Pay attention to on-page SEO elements, such as title tags, meta descriptions, headings, and keyword usage. Make sure your pages are well-structured and easy to navigate.

- Mobile Friendliness: Ensure your website is mobile-friendly. Google uses mobile-first indexing, meaning it primarily crawls and indexes the mobile version of a page, so your site should provide a good user experience on mobile devices.

- Page Speed: Speed matters. Google uses page speed as a ranking factor. Optimize your website’s performance to ensure fast loading times.

- Secure Your Website: Implement HTTPS to secure your website. Google treats HTTPS as a lightweight ranking signal.

- Quality Backlinks: Focus on acquiring high-quality backlinks from authoritative websites in your sector. Avoid low-quality or spammy backlinks.

- User Experience (UX): Provide a great user experience on your website. This includes easy navigation, clear calls to action, and a clean design.

- Avoid Black Hat SEO: Stay away from black hat SEO techniques, such as keyword stuffing, cloaking, and link schemes. These can lead to penalties.

- Regular Content Updates: Maintain current and engaging content. Regularly update and refresh existing content to maintain its relevance.

- Monitor Google’s Updates: Keep a close eye on the official Google Search Central Blog (formerly the Webmaster Central Blog) and other industry news sources for updates and algorithm changes. Being aware of these changes lets you adjust your approach.

- Use Google Search Console: Monitor your website’s performance in Google Search Console. It provides valuable insights into how Google sees your site and can alert you to issues.

- Quality Assurance: Regularly audit your website for any issues, such as broken links, duplicate content, and crawl errors.

- Engage with Users: Interact with your audience through social media, comments, and forums. Building a strong online community can improve your site’s reputation.

- Local SEO: If you run a local business, optimize your website for local search by setting up a Google Business Profile (formerly Google My Business) and obtaining reviews.

- Be Patient: Sometimes, it takes time for changes to reflect in search rankings. When pursuing SEO, be persistent and patient.
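Some of the on-page checks in this list (title tags, meta descriptions, headings, mobile viewport) are easy to automate. The sketch below is a minimal example using Python's standard `html.parser` module. The class name, the `audit` function, and the specific rules (for instance, expecting exactly one `<h1>`) are conventions assumed for illustration, not requirements stated by Google.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Records a few basic on-page SEO elements while parsing a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.h1_count = 0
        self.has_viewport = False
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            if name == "description":
                self.meta_description = attrs.get("content") or ""
            elif name == "viewport":
                self.has_viewport = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit(html):
    """Return a list of human-readable issues found in the page's HTML."""
    parser = OnPageAudit()
    parser.feed(html)
    issues = []
    if not parser.title.strip():
        issues.append("missing <title>")
    if not parser.meta_description:
        issues.append("missing meta description")
    if parser.h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {parser.h1_count}")
    if not parser.has_viewport:
        issues.append("missing viewport meta tag")
    return issues
```

An empty list from `audit(html)` only means these four basics are present; it says nothing about content quality, which remains the main factor discussed in this article.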

Remember that SEO is an ongoing process, and it’s important to adapt to changes in search algorithms and user behavior. While these practices can help you maintain a strong online presence, it’s essential to stay informed about any specific updates or guidelines Google may release in the future.

How Reliqus SEO Services Can Help Prevent Your Website from Being Affected by Spam Updates?

Looking for expert assistance in implementing these SEO best practices and staying ahead of Google’s algorithm updates? Reliqus is here to help! Our team of experienced professionals specializes in optimizing websites for search engines, ensuring you not only meet but exceed the latest SEO standards. With our personalized strategies and data-driven approach, we can enhance your online presence, drive organic traffic, and boost your rankings. Stay competitive and safeguard your website’s performance with Reliqus SEO Services today.

Feel free to contact us for a Free Consultation and take your SEO efforts to the next level!

Conclusion

The October 2023 Google Spam Update signals a shift towards a more refined, user-centric search experience. By targeting multilingual spam and specific spam types, the tech giant is working to create a more reliable and trustworthy internet for users across the globe. As the update unfolds over the coming weeks, users can look forward to cleaner search results and an internet landscape less plagued by spam.